Die Sigg es noch nit övversatz. Ehr luurt üch de änglesche Originalversion aan.

Sample-based Quantum Diagonalization (SQD)

Sample-based quantum diagonalization (SQD) combines classical linear algebra and the power of quantum computing to diagonalize a Hamiltonian (matrix) and compute its eigenvalues and eigenvectors. Matrix diagonalization is an important mathematical operation as many problems in science, computation and optimization use the method.

The video below gives an overview of SQD, what determines its usefulness, and what makes it faster than many other approaches. The subsequent text gives more details.

1. Introduction and motivation

Consider the energy eigenvalue equation made famous by Schrödinger, as an example.

is the Hamiltonian of a system, is the wave function (also known as the eigenstate), and is an eigenvalue. The eigenvalues of matrix represent the energy levels of the system. For example, if the system is a molecule, the lowest eigenvalue represents the ground state energy of the molecule. In many problems, we are interested in ground state energy estimation.

By applying exact diagonalization techniques from linear algebra, we can diagonalize the full matrix. However, the approach becomes computationally expensive (even impossible) as the matrix gets larger. For example, even for small chemical molecules, can be prohibitively large (for example, Hamiltonian for the molecule with a cc-PVDZ basis has a dimension of

Fortunately, we do not always need all the eigenvalues and eigenvectors of a Hamiltonian , and therefore, diagonalization of the full matrix is not required in many practical cases. For example, in case of ground state estimation, we are interested in the lowest eigenvalue and the corresponding eigenvector. This allows us to apply the concept of projection onto a (useful) subspace.

Consider an matrix, , where the complete vector space (Hilbert space) has a dimension of ( is large). Next, we select a subspace () - which is a subset of the full Hilbert space - of dimension , where is sufficiently small. After projecting onto this subspace, the projected matrix (say, ) will be smaller (). The smaller can be diagonalized using a suitable classical numerical method, and generate eigenvalues and eigenvectors for that subspace.

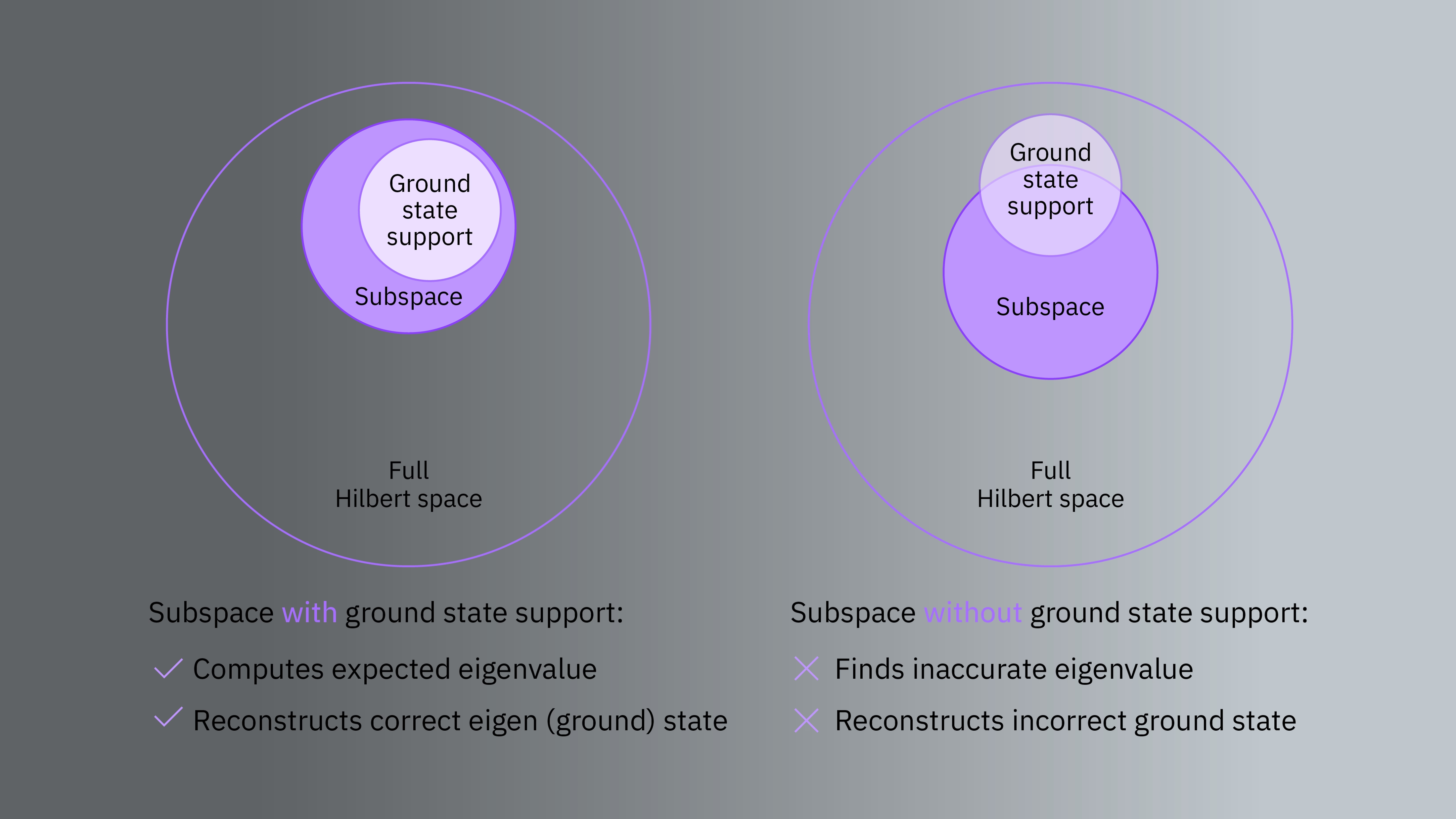

Note that the subspace must be in support of our target (for example, ground) eigenstate. In other words, the projected Hamiltonian must be in a subspace that includes the lowest eigenvalue.

2. Projection and Diagonalization

Consider we want to find the lowest eigenvalue and the corresponding eigenvector for the following Hamiltonian matrix .

We will diagonalize the full matrix along with different projected versions () for different subspaces to demonstrate the scalability and importance of the choice of subspace.

The ground state energy (minimum eigenvalue) of the matrix is and the exact ground state wave function (eigenvector) is:

that is, the ground state of the matrix is spanned by two computational basis states (vectors) and .

# Added by doQumentation — required packages for this notebook

!pip install -q numpy scipy

import numpy as np

from scipy.linalg import eigh

np.set_printoptions(precision=4, sign="-", suppress=True, linewidth=100)

H = np.array(

[

[0.2235, -0.039, -0.1035, -0.0818, 0.1746, 0.1091, 0.1165, -0.0104],

[-0.0390, 0.6621, 0.0706, -0.1964, -0.0782, 0.2619, 0.1095, 0.0029],

[-0.1035, 0.0706, 0.9961, 0.1724, 0.1067, -0.2299, -0.1817, 0.1571],

[-0.0818, -0.1964, 0.1724, -0.1773, 0.1019, -0.4778, -0.1272, -0.0414],

[0.1746, -0.0782, 0.1067, 0.1019, 0.1418, -0.1359, -0.1793, -0.0766],

[0.1091, 0.2619, -0.2299, -0.4778, -0.1359, 0.1014, 0.1696, 0.0552],

[0.1165, 0.1095, -0.1817, -0.1272, -0.1793, 0.1696, 0.4227, 0.2702],

[-0.0104, 0.0029, 0.1571, -0.0414, -0.0766, 0.0552, 0.2702, 0.4456],

]

)

eigvals, eigvecs = eigh(H)

print("Eigenvalues:")

print(eigvals)

print(f"Minimum eigenvalue: {eigvals.min()}")

print("\nEigenvectors (columns represent vectors):")

print(eigvecs)

print("\nEigenvector for the minimum eigenvalue (ground state)")

print(eigvecs[:, np.argmin(eigvals)])

Eigenvalues:

[-0.5357 -0.1321 0.1049 0.1258 0.3616 0.6405 0.947 1.3039]

Minimum eigenvalue: -0.5356560029438817

Eigenvectors (columns represent vectors):

[[-0. -0.5612 0.098 -0.0024 0.8051 -0.0806 0.0643 0.1288]

[-0. -0.1403 -0.1985 -0.4249 -0.0092 0.585 -0.5952 0.2526]

[ 0. 0.0416 0.3041 0.2122 0.1509 -0.0139 -0.5794 -0.7086]

[ 0.8 -0.1936 -0.0127 -0.4376 -0.1081 -0.0838 0.1557 -0.2966]

[ 0. 0.6716 -0.3535 -0.2552 0.5395 0.0954 0.1449 -0.1941]

[ 0.6 0.258 0.017 0.5834 0.1441 0.1118 -0.2076 0.3954]

[ 0. 0.3088 0.5504 -0.4197 0.0626 -0.468 -0.2625 0.3657]

[-0. -0.1146 -0.6559 0.0356 -0.0394 -0.6352 -0.3856 0.0418]]

Eigenvector for the minimum eigenvalue (ground state)

[-0. -0. 0. 0.8 0. 0.6 0. -0. ]

Next, we will project the matrix onto different subspaces and check if we can get the exact ground state. In particular, we will project the matrix onto a subspace spanned by:

- exact ground state vectors ( and ).

- vectors that excludes some or all exact ground state vectors (for example, , , and ).

- vectors that include both exact ground state and out-of-ground state (but not all possible vectors in the Hilbert space).

2.1 Case-1: Subspace includes ground state

Suppose we want to project in a subspace () spanned by two vectors and . The projected Hamiltonian is defined by:

x1 = np.zeros(8)

x1[3] = 1 # binary 011 is 3 in decimal. |011> = |3> = [0,0,0,1,0,0,0,0]

x2 = np.zeros(8)

x2[5] = 1 # binary 101 is 5 in decimal

Hs = np.array([[x1 @ H @ x1.T, x1 @ H @ x2.T], [x2 @ H @ x1.T, x2 @ H @ x2.T]])

print(Hs)

[[-0.1773 -0.4778]

[-0.4778 0.1014]]

eigvals, eigvecs = eigh(Hs)

print(f"Minimum eigenvalue: {eigvals.min()}")

print(f"Eigenvector for minimum eigenvalue: {eigvecs[:,np.argmin(eigvals)]}")

Minimum eigenvalue: -0.535656000064295

Eigenvector for minimum eigenvalue: [-0.8 -0.6]

We can make several key observations here.

- As we spanned the subspace with two vectors, the dimension of the projected matrix () is , which is smaller than the full matrix ().

- The minimum eigenvalue of the projected matrix matches the exact ground state's eigenvalue.

- The values in the

eigvecsvariable denotes the amplitude of subspace spanning vectors, and using them we can reconstruct the eigenstate (ground state). In this case, we end with the exact ground state (up to a global phase):

2.2 Case-2: Subspace excludes some or all ground state vectors

Next, we project onto a subspace spanned by three vectors , , and . We deliberately choose the vectors such that it excludes a ground state vector (). The projected Hamiltonian is defined by:

x1 = np.zeros(8)

x1[0] = 1

x2 = np.zeros(8)

x2[3] = 1

x3 = np.zeros(8)

x3[6] = 1

Hs = np.array(

[

[x1 @ H @ x1.T, x1 @ H @ x2.T, x1 @ H @ x3.T],

[x2 @ H @ x1.T, x2 @ H @ x2.T, x2 @ H @ x3.T],

[x3 @ H @ x1.T, x3 @ H @ x2.T, x3 @ H @ x3.T],

]

)

print(Hs)

[[ 0.2235 -0.0818 0.1165]

[-0.0818 -0.1773 -0.1272]

[ 0.1165 -0.1272 0.4227]]

eigvals, eigvecs = eigh(Hs)

print(f"Minimum eigenvalue: {eigvals.min()}")

Minimum eigenvalue: -0.21108858736702252

The eigenvalue in this case does not match the minimum eigenvalue of the full Hamiltonian. The key observation here is: if we project onto a subspace that excludes basis states in our target (ground) state - either partially or completely - the estimated ground state will be different than the exact one.

2.3 Case-3: Subspace includes both ground state and non-ground state vectors

Next, we show a case where the subspace is spanned by vectors that include exact ground state vectors along with unwanted vectors. Suppose our subspace is spanned by , (present in the exact ground state), and (absent in the exact ground state).

x1 = np.zeros(8)

x1[3] = 1

x2 = np.zeros(8)

x2[5] = 1

x3 = np.zeros(8)

x3[7] = 1

Hs = np.array(

[

[x1 @ H @ x1.T, x1 @ H @ x2.T, x1 @ H @ x3.T],

[x2 @ H @ x1.T, x2 @ H @ x2.T, x2 @ H @ x3.T],

[x3 @ H @ x1.T, x3 @ H @ x2.T, x3 @ H @ x3.T],

]

)

print(Hs)

[[-0.1773 -0.4778 -0.0414]

[-0.4778 0.1014 0.0552]

[-0.0414 0.0552 0.4456]]

eigvals, eigvecs = eigh(Hs)

print(f"Minimum eigenvalue: {eigvals.min()}")

print(f"Eigenvector for minimum eigenvalue: {eigvecs[:,np.argmin(eigvals)]}")

Minimum eigenvalue: -0.53565600006461

Eigenvector for minimum eigenvalue: [ 0.8 0.6 -0. ]

In this case, we again get as the minimum eigenvalue which matches with the full matrix (that is, the exact ground state). Another interesting result is the amplitude of returned by the projection and diagonalization process. The amplitude is , and when we reconstruct the wave function (eigenstate) with computed amplitudes and vectors, we get:

Thus, even if our subspace includes some non-target vectors (along with the full set of target vectors), we can compute the correct eigenvalue and eigenstate as the projection and diagonalization process filters out non-target vectors by setting their amplitudes to . This property of SQD provides an inherent noise tolerance.

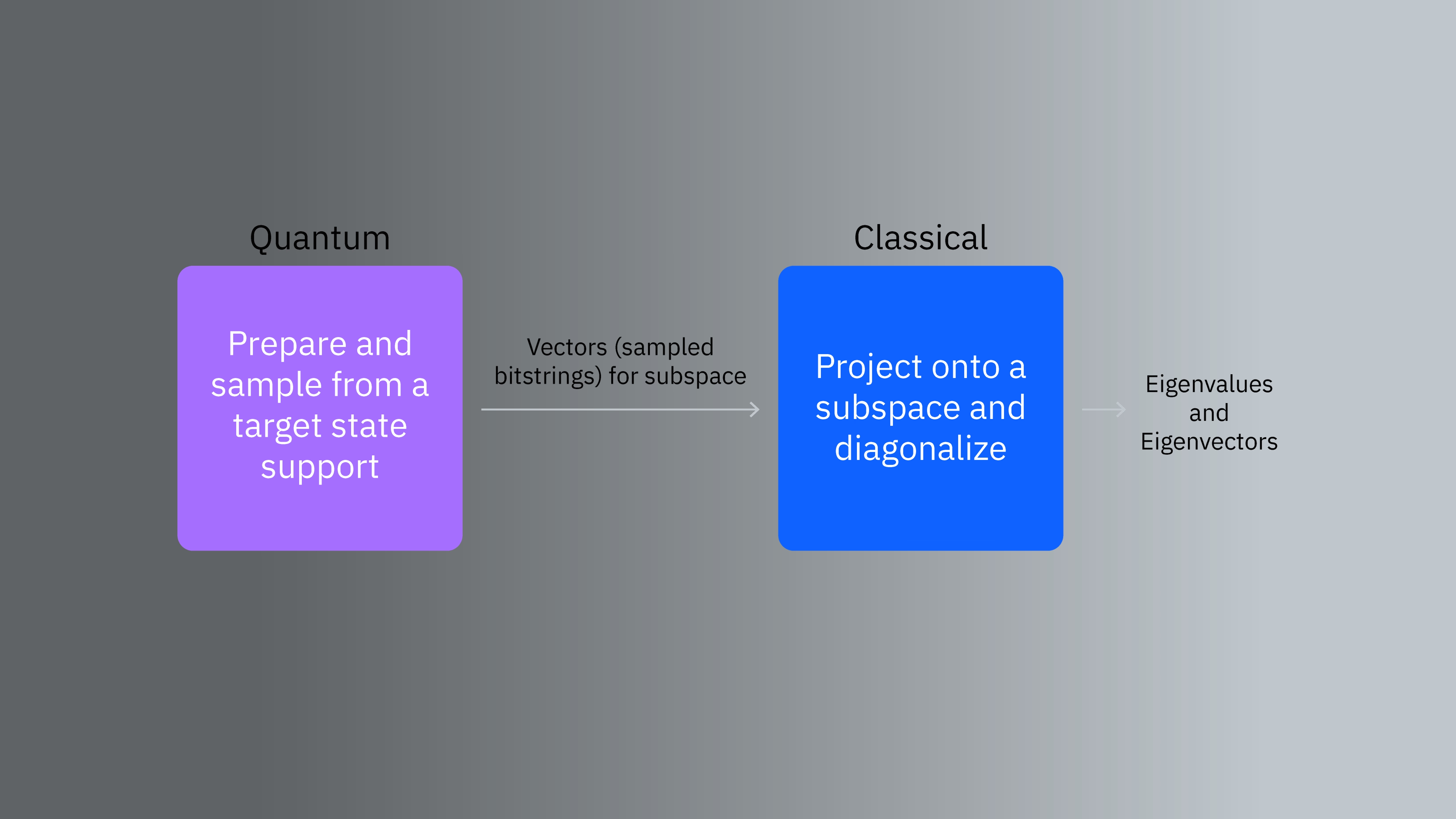

3. Role of quantum in SQD

Above analyses establish the importance of subspace spanning vectors, which must be in support of the target state. This raises an important question: How do we choose vectors with target state support for subspace construction?

This is where quantum computers come into play. The quantum-classical synergy works as follows in the SQD paradigm:

- Using a suitable quantum circuit, we try to prepare a state on a quantum computer that will generate basis states on which the target wave function (for example, ground state) has significant support. The sampled basis states (bitstrings) will span the subspace for Hamiltonian projection.

- A classical computer projects the Hamiltonian on to the subspace (spanned by samples/vectors from the quantum computer) and diagonalizes it to compute eigenvalues and eigenvectors using suitable numerical methods.

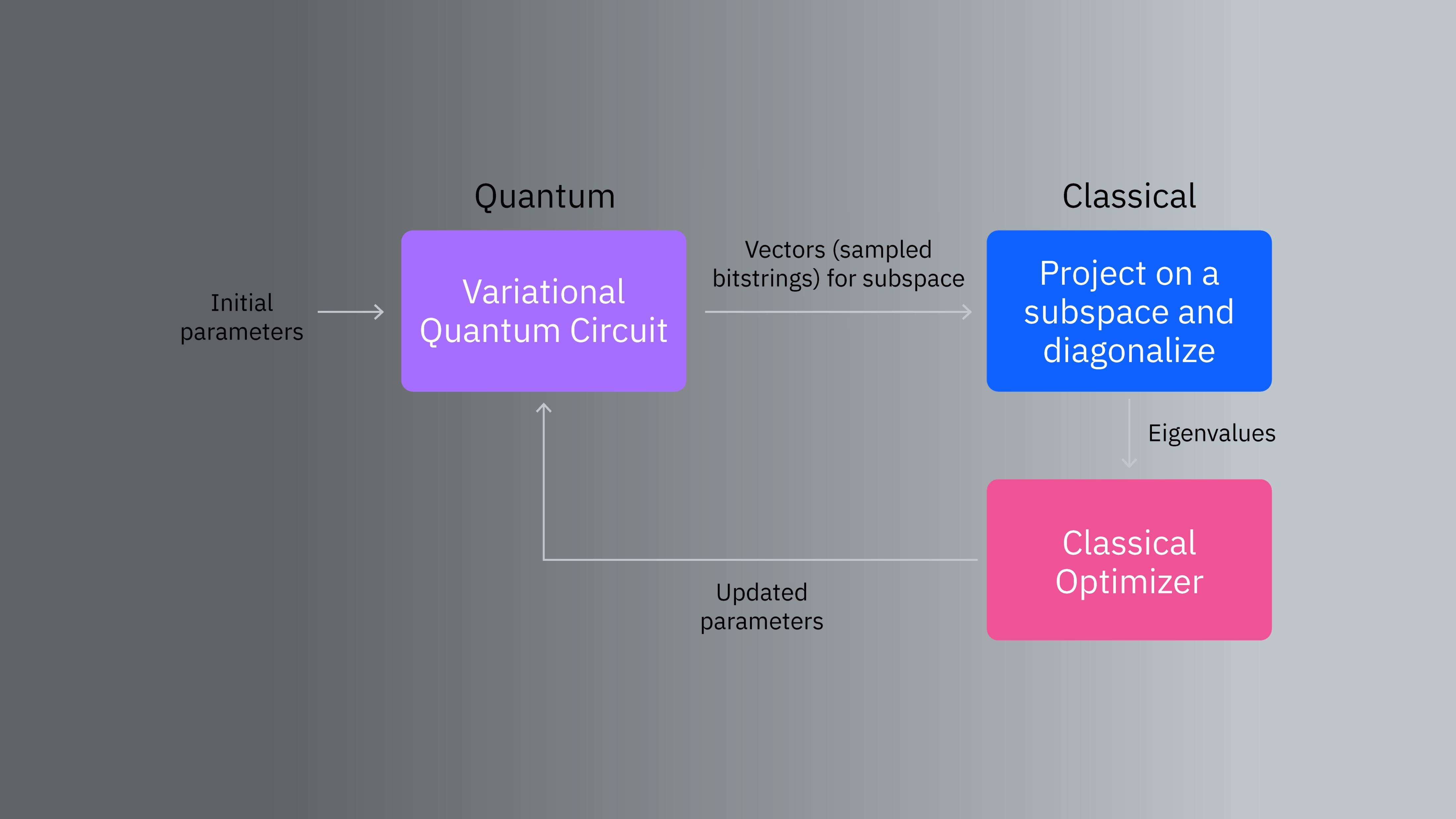

There can be several ways we can prepare such a quantum state, and they can be variational or non-variational depending on the problem.

There can be several ways we can prepare such a quantum state, and they can be variational or non-variational depending on the problem.

In next two lessons, we will show two specific examples of preparing states and sampling from them.

- In Lesson 4, we will use a parameterized local unitary coupled Jastrow (LUCJ) ansatz to generate samples for a chemistry problem (ground state energy estimation of molecule). We will initialize the LUCJ ansatz with parameters from classical coupled cluster singles and doubles (CCSD) computation.

- In Lesson 5, we will sample from Krylov basis states to span the subspace for a condensed matter physics problem. This approach is non-variational in nature.

Besides the problem-specific approaches above, a generic approach for state preparation involves a variational ansatz, where we will iteratively update ansatz parameters using a classical optimizer.

Samples from pre-fault tolerant quantum computers can be noisy. SQD employs a self-consistent configuration recovery process to correct noisy samples [1]. We will discuss the configuration recovery process in more detail and apply it for correcting noisy samples iteratively to refine the ground state energy estimation for an chemistry problem in Lesson 4.

Samples from pre-fault tolerant quantum computers can be noisy. SQD employs a self-consistent configuration recovery process to correct noisy samples [1]. We will discuss the configuration recovery process in more detail and apply it for correcting noisy samples iteratively to refine the ground state energy estimation for an chemistry problem in Lesson 4.

3.1 Notes on ground state support

Let us explain the concept of ground state support more. Ground state support can be defined as the set of basis states where ground state has non-zero amplitude (up to a cutoff threshold).

Suppose the exact ground state of a -qubit problem is

If we sample the above state, we should get a set of computational basis states , (other computational basis states have zero amplitude in the ground state, and therefore, ideally will not appear during sampling).

Ideally, the set of basis vectors for this states consists of (in other words, the subspace of this state is spanned by these two basis vectors).

In practice, we do not need to prepare the exact ground state as sampling many other states can give us the same set of vectors. For example:

Preparing and sampling from any of the above states will generate vectors that have non-zero amplitude in the ground state, and all of them qualify as to have ground state support. Note that, sampling includes one extra vector which has amplitude in the exact ground state. However, earlier we have shown that including such vectors in the subspace is not problematic as the projection and diagonalization operation sets the amplitude of unwanted vectors to , and we can get expected eigenvalue and reconstruct the correct eigenstate.

Thus, preparing and sampling from the exact ground state is not necessary. In fact, doing so can be difficult as the exact ground state is not known a priori, and it is often beneficial to not prepare and sample from the exact ground state, especially if the wave function (state) is skewed with some basis states having very high probabilities. Consider the following wave function:

This is a skewed wave function where basis states and have much larger amplitudes compared to and . When sampled, we will get and more frequently ( for and each, for , and for ). With finite sampling budget (shots), it is highly likely that our sampled set only contains and . As shown earlier, if we span the subspace with such a set with missing vectors, we will not be able to find the true minimum eigenvalue. Therefore, it will be beneficial (and necessary) to sample from a state with ground state support.

3.2 A case against uniform sampling

It can be tempting to draw samples from a uniform distribution to span the subspace. While it may work for small problems, it will start to fail for larger and more practical problems. For large problems with many qubits, the Hilbert space can be prohibitively large. For example, a 32-qubit Hilbert space has more than billion possible basis vectors (). If we uniformly sample from that space with finite sample budget (say vectors to keep the diagonalization process feasible), the subspace may exclude vectors with ground state support more often as the process will be random. Therefore, we need a systematic way to sample from ground state support leveraging quantum circuits.

4. SQD and Sparsity of the wave function

The gap between full Hilbert space and feasible subspace dimensions, brings another important aspect of SQD, and that is the sparsity of the wave function. The SQD approach works well for sparse or concentrated wave functions where a small fraction of basis states has non-negligible amplitudes. There are two reasons behind it:

- If the wave function is broad (that is, many basis states have non-negligible amplitudes), and we miss out including vectors with target state support in the subspace, we may end up with incorrect eigenvalues and eigenvectors.

- To avoid the above issue, we need to include many vectors in the subspace. However, the dimension of the projected Hamiltonian is directly related to the subspace dimension. A larger subspace will mean larger Hamiltonian, which may become infeasible to diagonalize.

We showcase the issue with the following matrix (). The lowest eigenvalue of the is , and the corresponding wave function (eigenstate) is broad:

H_new = np.array(

[

[-0.958, 0.1853, -0.2663, -0.3875, -0.0524, -0.3779, -0.0145, -0.3369],

[0.1853, -0.4081, -0.8549, -0.2312, 0.0615, -0.2493, -0.3804, -0.3312],

[-0.2663, -0.8549, -0.6929, -0.0063, -0.0478, -0.0236, -0.2494, -0.0669],

[-0.3875, -0.2312, -0.0063, -0.4468, -0.6301, -0.4627, -0.1188, 0.0753],

[-0.0524, 0.0615, -0.0478, -0.6301, -0.6664, -0.1514, -0.3571, -0.3644],

[-0.3779, -0.2493, -0.0236, -0.4627, -0.1514, -0.9605, 0.0137, 0.0035],

[-0.0145, -0.3804, -0.2494, -0.1188, -0.3571, 0.0137, -1.1449, 0.0433],

[-0.3369, -0.3312, -0.0669, 0.0753, -0.3644, 0.0035, 0.0433, -1.2307],

]

)

eigvals, eigvecs = eigh(H_new)

print(f"Minimum eigenvalue: {eigvals.min()}")

print(f"Eigenvector for minimum eigenvalue: {eigvecs[:,np.argmin(eigvals)]}")

Minimum eigenvalue: -2.208137504726661

Eigenvector for minimum eigenvalue: [0.3536 0.3536 0.3536 0.3536 0.3535 0.3536 0.3535 0.3535]

Suppose we project the onto a subspace spanned by four vectors: , , , and and compute the eigenvalue.

x1 = np.zeros(8)

x1[0] = 1

x2 = np.zeros(8)

x2[2] = 1

x3 = np.zeros(8)

x3[5] = 1

x4 = np.zeros(8)

x4[6] = 1

H_new_s = np.array(

[

[x1 @ H_new @ x1.T, x1 @ H_new @ x2.T, x1 @ H_new @ x3.T, x1 @ H_new @ x4.T],

[x2 @ H_new @ x1.T, x2 @ H_new @ x2.T, x2 @ H_new @ x3.T, x2 @ H_new @ x4.T],

[x3 @ H_new @ x1.T, x3 @ H_new @ x2.T, x3 @ H_new @ x3.T, x3 @ H_new @ x4.T],

[x4 @ H_new @ x1.T, x4 @ H_new @ x2.T, x4 @ H_new @ x3.T, x4 @ H_new @ x4.T],

]

)

print(H_new_s)

[[-0.958 -0.2663 -0.3779 -0.0145]

[-0.2663 -0.6929 -0.0236 -0.2494]

[-0.3779 -0.0236 -0.9605 0.0137]

[-0.0145 -0.2494 0.0137 -1.1449]]

eigvals, eigvecs = eigh(H_new_s)

print(f"Minimum eigenvalue: {eigvals.min()}")

Minimum eigenvalue: -1.4266552340586673

The above example shows that when the wave function is broad, and we do not include basis states in the subspace, the eigenvalue computation becomes incorrect.

5. SQD vs. VQE

As noted earlier, SQD may need a variational quantum circuit and iterative parameter updates to prepare and sample from ground state support. As this iterative parameter update routine is similar to VQE, one can ask how these methods are different, and what are the advantages of SQD over VQE? In this section, we compare the methods and discuss advantages of SQD with a molecule described with minimal basis set (sto-3g) as an example.

| VQE | SQD | |

|---|---|---|

| Measurement overhead | Many Pauli terms, many measurement circuits: The Hamiltonian for the molecule has unique Pauli terms. As the Pauli terms can contain and terms, and typical quantum measurements are done in the -basis, we need measurement basis change to evaluate those terms. When optimized for measurements, the terms can be grouped into groups, where each group can be evaluated using a single circuit. Thus, we need at least unique circuits to evaluate all Pauli terms. Many shots per circuit for tighter variance. Again, the evaluated expectation value of each Pauli term has a variance attached to them which depends inversely on the . Therefore, to accurately estimate each term, we need to allocate many shots per circuit. For example, to achieve chemical accuracy ( kcal/mol), typically we need shots in the order of - per circuit. Thus, VQE needs many measurement circuits and each circuit with certain number of shots. For practical cases, this measurement overhead can be restrictive. | In SQD, we do not need different measurement circuits for each grouped Pauli terms. Typically, we measure a single circuit for a fixed number of shots. While we may set the number of shots to a large value depending on the problem, the overhead remains much smaller than VQE. Also, the energy estimations using diagonalization process are exact, meaning the computed eigenvalues are exact in that subspace and does not have a variance attached to them like VQE. (In case of Krylov basis state sampling (Lesson 5), we need to measure multiple circuits, but the number circuit remains much smaller than VQE). |

| Estimated energy bound | In VQE, energy estimations are not bounded and can be lower than true minimum values due to noise. | The energy estimation process in SQD always produces upper bound to ground state energy and estimated energy will never be lower than the true ground state energy. |

| Noise tolerance | VQE energy estimation is susceptible to noise from pre-fault-tolerant quantum computers. | SQD has inherent tolerance to noise. Pre-fault-tolerant quantum computers can produce noisy samples. Even if we include those samples in the subspace, the subsequent diagonalization can suppress those samples by setting their amplitudes to zero. Also, we will discuss a method called configuration recovery in relation to SQD that improves SQD noise tolerance further. |

6. Summary

- In SQD, a quantum computer generate samples and a classical computer projects a Hamiltonian onto a subspace spanned by the samples and diagonalizes it to compute eigenvalues and eigenvectors.

- The generated samples should be from the target (ground) state support.

- Depending on the problem, the quantum state preparation and sample generation flow can be iterative or non-iterative.

- SQD works best for sparse wave functions. A broad wave function will require a large subspace for accurate solutions, which makes classical projection and diagonalization operation costly.

- SQD has several advantages over VQE such as lower measurement overhead and upper bound to estimated ground state energy, which make it more scalable.

References

[1] J. Robledo-Moreno et al., "Chemistry Beyond Exact Solutions on a Quantum-Centric Supercomputer" (2024). arXiv:quant-ph/2405.05068.